Prompt Engineering for Deep Research: Health Systems Edition

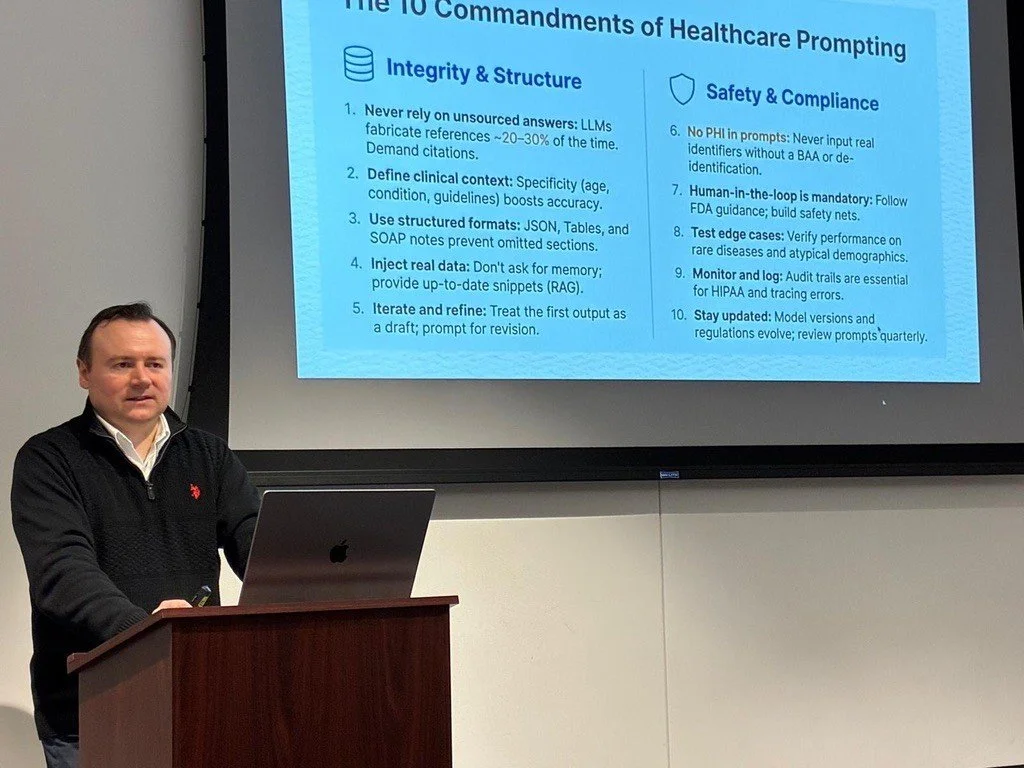

In our recent workshop “Prompt Engineering for Deep Research: Health Systems Focus,” Upskilling Labs Fellows (mostly healthcare professionals for this subset of the class), learned how to apply modern prompt-engineering techniques to produce AI outputs that are grounded, auditable, and safe.

The session was hands-on, using real health systems scenarios such as clinical trial summaries, patient data analysis, and clinical documentation support, and concluded with take-home labs so Fellows could continue practicing in their own environments.

A centerpiece of the workshop was the LLM (Large Language Model) Council Protocol, a multi-agent workflow that improves reliability by moving beyond one-shot prompting. Fellows learned to run the same prompt through multiple models in parallel, use anonymous peer critique to surface gaps, miss assumptions and potential hallucinations, and then synthesize the most rigorous, defensible final answer.

Why do it? In healthcare workflows, the cost of an ungrounded claim is high: it can mislead decision-making, contaminate documentation, or propagate errors downstream.

The Council Protocol approach helps teams reduce single-model blind spots, and counter confirmation bias, so outputs are not only higher quality, but are also more auditable and easier to verify. The impact is a repeatable process that produces answers that can be more reliable: clearer reasoning, fewer unsupported statements, and a built-in checklist for what still needs validation before anything is used in practice.

The idea is simple. Treat the model like it is presenting to a board of directors. Instead of relying on one LLM, the protocol:

Runs the same prompt through multiple models simultaneously

Has them critically review each other anonymously (reducing anchoring and groupthink)

Then synthesizes the best answer, with explicit assumptions and gaps

The aim is to reduce hallucinations, reduce bias and blind spots, and strengthen reasoning transparency.

Fellows applied that workflow alongside five prompt-engineering trends that are genuinely cutting edge in 2026 because they improve production reliability:

Context engineering: design a context budget, separate instructions from evidence, filter inputs into a compact Fact Pack before answering.

Contract-first outputs: define schemas/fields (plus citations) so results can be validated and repaired when they fail.

Evaluation-driven optimization: build a small “golden set” of healthcare cases and regression-test every prompt change.

Deliberate inference: generate multiple candidates, score with a rubric, and verify (retrieval, citation checks, tests) before shipping.

Security-first prompting: treat PDFs/web pages as untrusted, isolate them, enforce instruction hierarchy, use least-privilege tools.

If you’re using LLMs in healthcare systems, which of these would be applicable to your workflows today?

Full workshop slides presentation available for download:

https://drive.google.com/file/d/1zEmy_7M9UCpyAcxtRAP0zEm4IpBSRJnI/view?usp=sharing